Machine learning needs data, and sometimes lots of it, especially for initial training. Just as people need input to learn anything, so do machines. The key difference is that a machine's input must be digitized.

Another big difference is that machines are designed and built by humans, typically to perform specific tasks, such as driving a car, estimating a home's market value, recommending products, and so on. To a great degree, the purpose of the machine learning product and the data the machine needs to fulfill that purpose drive the design of the machine. The fitting process helps to optimize this design: the human developer chooses a statistical model and adjusts it so that it predicts values as close as possible to the ones observed in the data. This is called fitting the model to the data.
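To make "as close as possible" concrete, here is a minimal sketch that fits a straight line to a handful of home sales using ordinary least squares. The data, the `fit_line` helper, and the choice of a straight line are all assumptions made for illustration; a real project would pick the model and the data to suit the task.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of x around its mean, and how x and y vary together.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data: square footage and sale price (in thousands of dollars).
square_feet = [1000, 1500, 2000, 2500, 3000]
prices = [200, 290, 410, 500, 600]

slope, intercept = fit_line(square_feet, prices)

# Compare what the fitted model predicts with what was actually observed.
for x, observed in zip(square_feet, prices):
    predicted = slope * x + intercept
    print(f"{x} sq ft: observed {observed}, predicted {predicted:.1f}")
```

The gap between each observed and predicted price is the error that the fitting process tries to make as small as possible.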

Why Fit the Model to the Data?

The purpose of fitting the model to the data is to improve the model's accuracy in the task it is designed to perform, and that accuracy is then confirmed on new data. Think of it as the difference between a suit off the rack and a tailored suit. With a suit off the rack, you usually have too much fabric in some areas and not enough in others. A tailored suit, on the other hand, is adjusted to match the contours of the wearer's body. Fitting the model to the data involves making adjustments to the model to optimize the accuracy of the output.

With machine learning, fitting the model involves setting hyperparameters. These are settings, defined by a human before training, that constrain how the model learns. Hyperparameters include the choice and arrangement of machine learning algorithm(s), the number of hidden layers in an artificial neural network, and the selection of different predictors.

The fine-tuning of hyperparameters is a big part of what data scientists do. They build models, run experiments on small datasets, analyze the results, and tweak the hyperparameters to get more accurate results.
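That experiment loop can be sketched in a few lines. The example below is an illustration only: it uses a k-nearest-neighbors predictor, which is not mentioned above, because it has a single obvious hyperparameter (`k`, the number of neighbors to average). The data and helper names are hypothetical.

```python
def knn_predict(train, x, k):
    """Average the prices of the k training homes closest in size to x."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    return sum(price for _, price in nearest) / k


def validation_error(train, validation, k):
    """Mean squared error of the k-NN predictor on held-out homes."""
    errors = [(knn_predict(train, x, k) - y) ** 2 for x, y in validation]
    return sum(errors) / len(errors)


# Hypothetical (square feet, price in thousands) pairs.
train = [(1000, 205), (1200, 236), (1400, 290), (1600, 310),
         (1800, 372), (2000, 395), (2200, 450), (2400, 470)]
validation = [(1100, 220), (1500, 300), (1900, 380), (2300, 460)]

# Run one small experiment per candidate hyperparameter value.
candidate_ks = [1, 2, 4, 8]
scores = {k: validation_error(train, validation, k) for k in candidate_ks}

# Keep the value that gives the lowest error on data the model has not seen.
best_k = min(scores, key=scores.get)
print(scores)
print("best k:", best_k)
```

The important design choice is that each candidate is scored on validation data rather than on the training data, so the tweak that wins is the one that generalizes best.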

Underfitting and Overfitting in Machine Learning

Poor performance of a model can often be attributed to underfitting or overfitting:

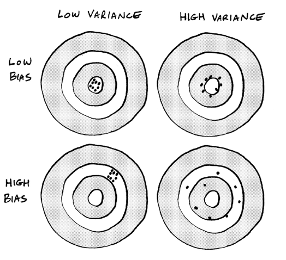

- Underfitting results in high bias, an error that occurs when the model is too simple for the complexity of the observed data. A simple model lets the machine learn fast, but it misses real patterns and so predicts poorly even on the data it was trained on.

- Overfitting results in high variance. This happens when a model is too complex: it tries too hard to account for every data point, so it is overly sensitive to small variations in the training data and performs poorly on new data.

The ultimate goal of the tuning process is to keep both bias and variance low, which usually means trading one off against the other.
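A small experiment can make the difference visible. The sketch below compares three models on the same hypothetical home-price data: a constant that ignores the input (too simple), a least-squares line, and a model that simply memorizes the training set (standing in here for an overly complex model). The data and all names are assumptions for illustration.

```python
def mse(model, data):
    """Mean squared error of a prediction function on (x, y) pairs."""
    return sum((model(x) - y) ** 2 for x, y in data) / len(data)


# Hypothetical (square feet, price in thousands) pairs.
train = [(1000, 205), (1200, 236), (1400, 290), (1600, 310),
         (1800, 372), (2000, 395), (2200, 450), (2400, 470)]
test = [(1100, 224), (1500, 296), (1900, 385), (2300, 455)]

# Too simple: always predict the average training price.
mean_price = sum(y for _, y in train) / len(train)

def constant_model(x):
    return mean_price

# Reasonable: a least-squares line through the training data.
mean_x = sum(x for x, _ in train) / len(train)
slope = (sum((x - mean_x) * (y - mean_price) for x, y in train)
         / sum((x - mean_x) ** 2 for x, _ in train))
intercept = mean_price - slope * mean_x

def linear_model(x):
    return slope * x + intercept

# Too flexible: return the price of the closest training home, noise and all.
def memorizing_model(x):
    return min(train, key=lambda point: abs(point[0] - x))[1]

models = {"constant": constant_model,
          "linear": linear_model,
          "memorizing": memorizing_model}
results = {name: (mse(m, train), mse(m, test)) for name, m in models.items()}

for name, (train_error, test_error) in results.items():
    print(f"{name:>10}: train error {train_error:8.1f}, test error {test_error:8.1f}")
```

The constant model is wrong everywhere (high bias). The memorizing model is perfect on the training data but noticeably worse than the line on new homes (high variance). The line sits between the two extremes.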

Consider a real-world example. Imagine you work for a website like Zillow that estimates home values based on the values of comparable homes. To keep the model simple, you create a basic regression chart that shows the relationship between the location of a house, its square footage, and its price. Your chart shows that big houses in nice areas have higher values. This model benefits from being intuitive. You would think that a big house in a nice area is more expensive than a small house in a rundown neighborhood. The model is also easy to visualize.

Unfortunately, this model isn't very flexible. A big house could be poorly maintained. It might have a lousy floor plan or be built on a floodplain. These factors would impact the home's value, but they wouldn't be considered in the model. Because it doesn't account for enough of the factors that drive price, this model is likely to make inaccurate predictions; it suffers from underfitting, resulting in high bias.

To reduce the bias, you add complexity to the model in the form of additional predictors:

- Nice view

- Updated kitchen

- Walkable neighborhood

As you add predictors, the model becomes more flexible, but also more complex and difficult to manage. Add too many and you have solved the bias problem only to give the model too much variance due to overfitting. As a result, the machine's predictions are off the mark for too many homes in the area.

Increasing Signal and Reducing Noise

To avoid underfitting and overfitting, you want to capture more signal and less noise:

- Signal is the collective term used to describe predictors that drive accurate predictions and classifications.

- Noise is irrelevant data or randomness in a dataset that reduces the accuracy of predictions or classifications.

In our Zillow example, you can capture more signal by choosing better predictors, such as number of bedrooms, number of bathrooms, quality of the school system, and so on, while eliminating less useful predictors, such as attic or basement storage. You would need to examine the data closely to determine the factors that truly impact a home's value. In short, as the human developer, you need to put some careful thought into it.
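One simple way to start examining the data is to check how strongly each candidate predictor moves with price. The sketch below ranks three predictors by the absolute value of their Pearson correlation with price. Correlation is only a rough first screen and is an assumption here, not a complete method for choosing predictors; the numbers are invented for illustration.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return covariance / (spread_x * spread_y)


# Hypothetical data for six homes (prices in thousands of dollars).
prices = [250, 310, 400, 480, 560, 650]
predictors = {
    "bedrooms": [2, 3, 3, 4, 4, 5],
    "school_rating": [4, 5, 7, 6, 8, 9],
    "attic_storage": [1, 0, 1, 0, 0, 1],  # 1 = has attic storage
}

correlations = {name: pearson(values, prices)
                for name, values in predictors.items()}

# Strongest relationship first; weak ones are candidates for removal.
ranking = sorted(correlations, key=lambda name: abs(correlations[name]),
                 reverse=True)
for name in ranking:
    print(f"{name:>14}: {correlations[name]:+.2f}")
```

In this made-up dataset, bedrooms and school rating track price closely, while attic storage barely relates to it, which makes it a candidate for removal.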

Frequently Asked Questions

What is model fitting in data science?

Model fitting in data science refers to the process of adjusting a statistical model so that it best fits the data.

This involves finding the set of parameters that minimize the error between the model's predictions and the actual data points.

Why is model fitting important?

Model fitting is important because it determines how well a machine learning model can make predictions based on the data.

What does “parameter” mean in the context of model fitting?

In the context of model fitting, a parameter is a variable that can be adjusted during the fitting process. The goal is to find the set of parameters that allow the model to closely match the original data.
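As an illustration of parameters being adjusted during fitting, the sketch below uses gradient descent, one common fitting technique, to nudge two parameters (a slope and an intercept) until a line matches some hypothetical home-price data. The data, learning rate, and step count are assumptions chosen to keep the example small.

```python
def mean_squared_error(slope, intercept, xs, ys):
    """Average squared gap between the line's predictions and the data."""
    return sum((slope * x + intercept - y) ** 2
               for x, y in zip(xs, ys)) / len(xs)


def gradient_descent(xs, ys, learning_rate=0.05, steps=5000):
    """Repeatedly adjust slope and intercept in the direction that lowers the error."""
    slope, intercept = 0.0, 0.0  # arbitrary starting parameters
    n = len(xs)
    for _ in range(steps):
        residuals = [slope * x + intercept - y for x, y in zip(xs, ys)]
        # Gradient of the mean squared error with respect to each parameter.
        grad_slope = 2 * sum(r * x for r, x in zip(residuals, xs)) / n
        grad_intercept = 2 * sum(residuals) / n
        slope -= learning_rate * grad_slope
        intercept -= learning_rate * grad_intercept
    return slope, intercept


# Hypothetical data: size in thousands of square feet, price in thousands of dollars.
sizes = [1.0, 1.5, 2.0, 2.5, 3.0]
prices = [200, 290, 410, 500, 600]

error_before = mean_squared_error(0.0, 0.0, sizes, prices)
slope, intercept = gradient_descent(sizes, prices)
error_after = mean_squared_error(slope, intercept, sizes, prices)

print(f"slope={slope:.2f}, intercept={intercept:.2f}")
print(f"error before fitting: {error_before:.1f}, after: {error_after:.1f}")
```

The sizes are expressed in thousands of square feet so that both parameters are on a similar scale, which helps this simple update rule settle quickly.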

How do you determine the best fit for a model?

The best fit for a model is determined by minimizing the error between the model's predictions and the actual data points.

A common example is linear regression, where the least-squares method finds the straight line that best fits the data points.
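For a single predictor, this minimization can be written out explicitly. The notation is an assumption introduced for illustration: $x_i$ and $y_i$ are the observed inputs and outputs, $\bar{x}$ and $\bar{y}$ are their means, and $\beta_0$ and $\beta_1$ are the line's intercept and slope.

```latex
\[
(\hat\beta_0, \hat\beta_1)
  = \arg\min_{\beta_0,\, \beta_1}
    \sum_{i=1}^{n} \bigl( y_i - (\beta_0 + \beta_1 x_i) \bigr)^2
\]
\[
\hat\beta_1
  = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}
         {\sum_{i=1}^{n} (x_i - \bar{x})^2},
\qquad
\hat\beta_0 = \bar{y} - \hat\beta_1 \bar{x}
\]
```

The first line states the goal (the smallest possible sum of squared errors), and the second gives the slope and intercept that achieve it.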

Can a model fit the data too closely? What is overfitting?

Yes, a model can fit the data too closely. This is overfitting. It happens when the model captures the noise along with the underlying pattern of the data.

How is the variability in data handled during model fitting?

Variability in data is handled by adjusting the model parameters to best capture the overall trend rather than the individual data points.

This makes the model more reliable in its predictions even if there is some noise or variability in the data.

This is my weekly newsletter that I call The Deep End because I want to go deeper than results you’ll see from searches or LLMs. Each week I’ll go deep to explain a topic that’s relevant to people who work with technology. I’ll be posting about artificial intelligence, data science, and ethics.

This newsletter is 100% human written 💪 (* aside from a quick run through grammar and spell check).
